While large language models have been dominating the headlines, a quieter revolution has been unfolding behind the scenes: machine learning models are becoming competitive in weather forecasting [Mas23R].

Traditional numerical weather prediction (NWP) relies on meticulously crafted physics equations to simulate atmospheric behavior. These equations, transformed into sophisticated computer algorithms, require substantial computational resources, typically supercomputers, to generate weather forecasts. While this approach has been a triumph of science and engineering, it is labor-intensive, demanding expertise in equation design and algorithm development, along with significant computational power for accurate predictions.

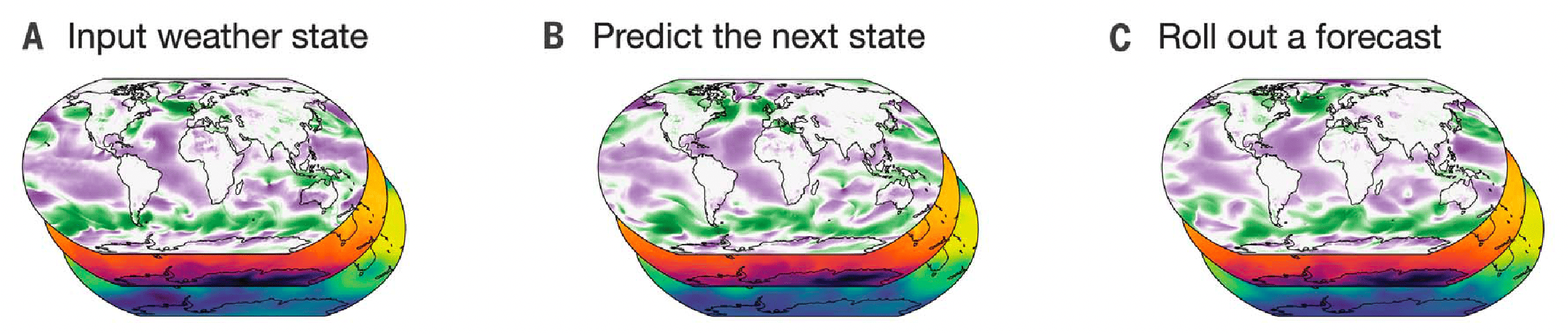

Fig. 1 [Lam23G] Model schematic. (A) The input weather state(s) are defined on a 0.25° latitude-longitude grid comprising a total of 721 × 1440 = 1,038,240 points. Yellow layers in the close-up pop-out window represent the five surface variables, and blue layers represent the six atmospheric variables that are repeated at 37 pressure levels (5 + 6 × 37 = 227 variables per point in total), resulting in a state representation of 235,680,480 values. (B) GraphCast predicts the next state of the weather on the grid. (C) A forecast is made by iteratively applying GraphCast (GC) to each previous predicted state, to produce a sequence of states that represent the weather at successive lead times.
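The counts quoted in the caption of Fig. 1 can be reproduced with a few lines of Python. This is only a sanity check of the arithmetic. The variable names are ours, and the memory estimate assumes 32-bit floats, which the caption does not state.

```python
# Grid and variable counts as stated in the caption of Fig. 1.
n_lat = int(180 / 0.25) + 1   # 721 latitudes: 0.25° steps from -90° to 90°, both ends included
n_lon = int(360 / 0.25)       # 1440 longitudes: 0.25° steps around the globe
n_surface = 5                 # surface variables
n_atmospheric = 6             # atmospheric variables, repeated at every pressure level
n_levels = 37                 # pressure levels

grid_points = n_lat * n_lon
vars_per_point = n_surface + n_atmospheric * n_levels
state_size = grid_points * vars_per_point

# Assumption (not from the source): each value is stored as a 4-byte float.
state_gigabytes = state_size * 4 / 1e9

print(grid_points, vars_per_point, state_size)  # 1038240 227 235680480
print(round(state_gigabytes, 2))                # 0.94
```

Under that storage assumption, a single global weather state occupies a little under one gigabyte.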

Machine learning offers a different approach: instead of physical equations, deep learning models use historical data to learn to predict the weather efficiently. Following a series of papers driven predominantly by large technology companies such as Huawei [Bi23A] and NVIDIA [Pat22F], Google DeepMind recently published a paper on GraphCast in Science [Lam23G], highlighting the rapid progress in the quality of ML-based weather forecasts.

Fig. 2 [Lam23G] Global skill and skill scores for GraphCast and HRES in 2018. (A) RMSE skill (y axis) for GraphCast (blue lines) and HRES (black lines), on Z500, as a function of lead time (x axis). Error bars represent 95% confidence intervals. The vertical dashed line represents 3.5 days, which is the last 12-hour increment of the HRES 06z/18z forecasts. The black line represents HRES, where lead times earlier and later than 3.5 days are from the 06z/18z and 00z/12z initializations, respectively.

GraphCast is a Graph Neural Network (GNN) trained on four decades of weather reanalysis data from ECMWF’s ERA5 dataset [Her20E]. This trove combines historical weather observations from sources such as satellite images, radar, and weather stations with a traditional NWP model that ‘fills in the blanks’ where the observations are incomplete, reconstructing a rich record of global historical weather. GraphCast is built for medium-range weather forecasts: it is trained to predict 6-hour increments and uses auto-regressive roll-outs to compute forecasts up to 10 days ahead at 0.25° resolution globally. The GraphCast code is open source, and the model is already integrated into ECMWF’s forecasting system, which provides a web interface to explore the predictions.
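The auto-regressive roll-out can be illustrated with a minimal sketch. It is not GraphCast's actual code: `rollout` and `toy_step` are hypothetical names, the toy step function merely damps a small array instead of running a trained GNN, and the sketch feeds in a single previous state, whereas Fig. 1 speaks of input weather state(s). What it shows is the loop structure: each 6-hour prediction becomes the input for the next step, so a 10-day forecast takes 40 model applications.

```python
import numpy as np

STEP_HOURS = 6      # the model predicts 6-hour increments
HORIZON_DAYS = 10   # medium-range forecast horizon


def rollout(step_fn, initial_state, n_steps):
    """Apply step_fn repeatedly, feeding each prediction back in as the next input."""
    states = []
    state = initial_state
    for _ in range(n_steps):
        state = step_fn(state)
        states.append(state)
    # Shape: (n_steps, *initial_state.shape), one entry per lead time.
    return np.stack(states)


def toy_step(state):
    # Hypothetical stand-in for the trained model: slightly damp the field.
    return 0.9 * state


n_steps = HORIZON_DAYS * 24 // STEP_HOURS            # 40 steps of 6 hours
initial = np.ones((4, 8))                            # tiny toy "grid", not 721 x 1440
forecast = rollout(toy_step, initial, n_steps)
lead_times_hours = STEP_HOURS * np.arange(1, n_steps + 1)

print(forecast.shape)        # (40, 4, 8)
print(lead_times_hours[-1])  # 240
```

Because every step consumes the previous prediction, any error made early in the roll-out is carried into all later lead times, which is one reason forecast skill is reported as a function of lead time.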

Remarkably, GraphCast can make predictions in under 1 minute, much faster than the industry gold-standard weather simulation system HRES, and with unprecedented accuracy. In a comprehensive performance evaluation, GraphCast provided more accurate predictions on more than 90% of 1380 test variables and forecast lead times (for more details, see [Lam23G]). The model can even predict extreme events such as tropical cyclones, although it was not explicitly trained on them.

This development represents a significant milestone in the evolution of scientific machine learning, as ML models begin to surpass the performance of traditional physics-based models. In 2018, ECMWF did not anticipate such a shift occurring in the near future [Mas23R]. However, the situation changed rapidly between February 2022 and April 2023, a change largely attributed to the establishment of comprehensive benchmarks such as WeatherBench [Ras23W].

It is surprising that an ML model not only generates predictions for a complex physical system much faster, but is also more accurate! This likely stems from its ability to discern patterns in the data that humans haven’t yet discovered or included in modeling. Such capabilities give ML-based methods a considerable advantage, but these advancements do not herald the obsolescence of conventional modeling. Physics-based models, such as ECMWF’s Integrated Forecasting System (IFS), have been the key ingredient in generating the ERA5 dataset and provide the initial conditions required to run these ML models.

We believe GraphCast marks a turning point in computational modeling, and we will see more and more ML-based models outperforming traditional numerical methods for modeling complex dynamical systems, both in terms of accuracy and computational efficiency.