Most recent machine learning research has focused on improving model performance, often taking the quality of the training and test data as a given. However, as illustrated in Figure 1, the typical workflow for real-world machine learning applications is dominated by data collection, labeling, and cleaning. These tasks are frequently manual and time-intensive, and as models become more intricate and datasets grow in size, the time and computational resources they require are becoming the primary constraints. This situation is likely to worsen, as we discussed in a recent paper pill.

Figure 1. From [Maz23D]: an example of a typical ML model training workflow. Notice that data preparation is often the most involved and time-consuming part of the process.

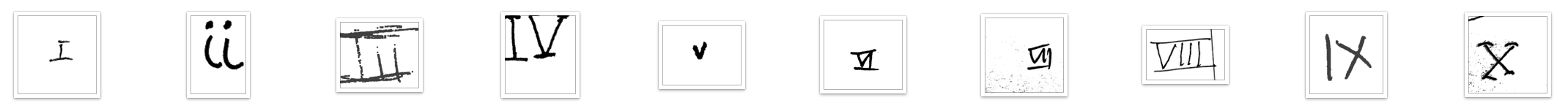

The “Data-Centric AI challenge” (DCAI), which gained significant attention for its focus on improving data quality, was a notable event launched at the NeurIPS 2021 conference. The competition’s objective was to train the most effective model for classifying images of Roman numeral digits (see Figure 2 for examples). Importantly, the participants’ task was to select the best training samples, while the model architecture, training hyperparameters and dataset size remained fixed. The outcomes were remarkable: the majority of entries significantly improved upon the baseline accuracy achieved by random training set selection. The winners achieved an accuracy of $85\%$, a substantial increase from the baseline of $64\%$, approaching the human-level performance of $90\%$.

The most effective techniques included data augmentation, removing inaccurate labels or noisy images, adding specific samples to better cover edge cases (the so-called “long tail”, see our pill on model memorization), and correcting class imbalance. More details from the winning teams can be found in the related blog post.

Figure 2. Examples of input images in the DCAI 2021 challenge (from the competition submission worksheet).

Inspired by the outcome of the DCAI challenge, a new set of benchmarks for data-centric evaluation, known as DataPerf, was introduced at the NeurIPS 2023 conference [Maz23D]. DataPerf provides a diverse array of problems that mirror real-world scenarios, with applications spanning speech, vision and generative model prompting. Among the initial selection, the following challenges caught my attention:

- Data Selection for Vision: The importance of large datasets for complex computer vision tasks is undeniable. While sourcing high-resolution images from the internet is straightforward, the process of labeling them is often lengthy, tedious, and costly. This competition aims to alleviate such issues by focusing on data selection. Participants are tasked with identifying the most relevant images to label from a large candidate pool (the Open Images V6 dataset). With fixed concepts such as “sushi” or “hawk” and some positive examples, the objective is to find the best positive and negative images within a set budget. Submissions include both the selected training set and a description of the method.

- Debugging for Vision: Manual image labeling is not only time-consuming and costly, but also susceptible to errors. The objective of this competition is to devise the most effective strategy for pinpointing the harmful data points within a noisy and corrupted dataset. Submissions are evaluated through a ranking of the provided samples: an identical classifier architecture is retrained on an increasing number of top-ranked samples, and the mean accuracy on the test set is used as an indicator of the quality of the ranking.

- Data Acquisition: Acquiring datasets from “data brokers” is a prevalent practice in the industry, although it can be quite costly and the resulting accuracy can fluctuate significantly. As a result, it’s common to initially buy a small sample from various brokers and assess their quality before making a larger investment. The objective of this competition is to determine the optimal strategy for buying data from multiple vendors, each offering different data accuracy and pricing. Specifically, participants are given a few samples, summary statistics, and pricing functions, and they must devise the best acquisition strategy to optimize the accuracy of a classifier within a set budget.
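
To make the ranking-based evaluation described for the Debugging for Vision challenge more concrete, here is a minimal sketch in plain Python. It is not the official DataPerf evaluation code: the nearest-centroid classifier, the synthetic two-blob data, the 30% label-flip rate, the prefix fractions and all function names (`fit_centroids`, `score_ranking`, `make_blobs`) are assumptions chosen purely for illustration.

```python
import random
from statistics import mean


def fit_centroids(points, labels):
    """Fit a nearest-centroid classifier: one mean point per class."""
    grouped = {}
    for point, label in zip(points, labels):
        grouped.setdefault(label, []).append(point)
    return {
        label: tuple(mean(coord) for coord in zip(*members))
        for label, members in grouped.items()
    }


def predict(centroids, point):
    """Return the label of the centroid closest to `point`."""
    def sq_dist(label):
        return sum((a - b) ** 2 for a, b in zip(point, centroids[label]))
    return min(centroids, key=sq_dist)


def accuracy(centroids, points, labels):
    """Fraction of points whose predicted label matches the given label."""
    hits = sum(predict(centroids, p) == y for p, y in zip(points, labels))
    return hits / len(points)


def score_ranking(ranking, train, test, fractions=(0.2, 0.4, 0.6, 0.8, 1.0)):
    """Retrain on growing prefixes of `ranking` and average the test accuracy."""
    (train_x, train_y), (test_x, test_y) = train, test
    accuracies = []
    for fraction in fractions:
        top = ranking[: max(1, int(fraction * len(ranking)))]
        model = fit_centroids([train_x[i] for i in top], [train_y[i] for i in top])
        accuracies.append(accuracy(model, test_x, test_y))
    return mean(accuracies)


def make_blobs(n, rng):
    """Two Gaussian blobs centred at (-2, 0) and (2, 0), classes alternating."""
    labels = [i % 2 for i in range(n)]
    points = [(rng.gauss(4 * y - 2, 1.0), rng.gauss(0.0, 1.0)) for y in labels]
    return points, labels


rng = random.Random(0)
train_x, clean_y = make_blobs(200, rng)
test = make_blobs(200, rng)

# Corrupt 30% of the training labels to mimic annotation errors (assumed rate).
flipped = set(rng.sample(range(200), 60))
noisy_y = [1 - y if i in flipped else y for i, y in enumerate(clean_y)]

# An oracle ranking puts clean samples first; its reverse puts noisy samples first.
clean_first = sorted(range(200), key=lambda i: i in flipped)
noisy_first = clean_first[::-1]

good_score = score_ranking(clean_first, (train_x, noisy_y), test)
bad_score = score_ranking(noisy_first, (train_x, noisy_y), test)
print(f"clean-first: {good_score:.3f}, noisy-first: {bad_score:.3f}")
```

In this toy setting the oracle ranking scores higher because its early prefixes contain only correctly labeled samples, whereas the reversed ranking trains its first models almost exclusively on flipped labels. A real submission would of course not have access to the set of flipped labels and would have to estimate the ranking from the data.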

You can find all existing and new competitions, along with details about evaluation tools, leaderboards, and documentation, on the Dynabench platform. Dynabench and DataPerf are hosted and maintained by the MLCommons Association, a non-profit organization dedicated to accelerating innovation in machine learning for the benefit of both research and industry. Specifically, the MLCommons Data-Centric ML working group is actively involved in maintaining and developing new benchmarks for data-centric machine learning.

You might have noticed that the DataPerf benchmarks are a perfect fit for data valuation. This is described in our recent blog post Applications of data valuation in machine learning. If you want to apply these ideas in the competition, you can try out pyDVL: the Python Data Valuation Library.
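
As a rough illustration of how data valuation can flag harmful samples, the sketch below computes leave-one-out values for a tiny one-dimensional dataset. It deliberately does not use pyDVL's API: the 1-nearest-neighbour classifier, the toy data and the function names (`predict_1nn`, `utility`, `leave_one_out_values`) are assumptions made for this example only.

```python
def predict_1nn(train_x, train_y, x):
    """Label of the training point closest to x (1-nearest-neighbour)."""
    nearest = min(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return train_y[nearest]


def utility(train_x, train_y, test_x, test_y):
    """Test accuracy of a 1-NN classifier built on the given training set."""
    hits = sum(predict_1nn(train_x, train_y, x) == y for x, y in zip(test_x, test_y))
    return hits / len(test_x)


def leave_one_out_values(train_x, train_y, test_x, test_y):
    """value_i = utility(all points) - utility(all points except i)."""
    full = utility(train_x, train_y, test_x, test_y)
    values = []
    for i in range(len(train_x)):
        rest_x = train_x[:i] + train_x[i + 1:]
        rest_y = train_y[:i] + train_y[i + 1:]
        values.append(full - utility(rest_x, rest_y, test_x, test_y))
    return values


# Toy 1-D data: negative inputs belong to class 0, positive inputs to class 1.
# The last training point (x = 0.4, labelled 0) is deliberately mislabelled.
train_x = [-2.0, -1.0, 1.0, 2.0, 0.4]
train_y = [0, 0, 1, 1, 0]
test_x = [-1.6, -0.6, 0.5, 1.6]
test_y = [0, 0, 1, 1]

values = leave_one_out_values(train_x, train_y, test_x, test_y)
worst = min(range(len(values)), key=values.__getitem__)
print(values, worst)
```

Here the mislabelled point is the only one with a negative value, because removing it raises the test accuracy. Sorting samples by such values gives exactly the kind of ranking that a debugging-style benchmark asks for, although leave-one-out is only the simplest of the available valuation methods.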