One of this year’s ICML Outstanding Paper Awards is received by the paper “Learning-Rate-Free Learning by D-Adaptation” [Def23L]. Defazio et al. propose D-Adaptation, an approach for setting the learning rate of stochastic gradient methods automatically. It works without back-tracking, line searches, or additional function evaluations, and asymptotically achieves the optimal rate of convergence for minimizing convex Lipschitz functions. Thus, D-Adaptation removes the need for tuning the learning rate, which is a major practical advantage.

The paper presents extensive experiments for SGD and Adam variants of the method, where the method automatically matches hand-tuned learning rates across more than a dozen diverse machine learning problems, including large-scale vision and language problems.

Figure 2 [Def23L]: Vision experiments.

An open-source implementation is available as a drop-in replacement for PyTorch optimizers, which makes it very easy to use the new method in existing machine learning projects. This, together with the excellent performance, makes D-Adaptation an important addition to the toolbox of machine learning practitioners.

The Idea of D-Adaptation

To understand the approach, consider the unconstrained convex minimization problem $$ \min_{x \in \mathbb{R}^p} f(x), $$ where $f$ has Lipschitz constant $G$ and a non-empty set of minimizers. Gradient descent, starting at point $x_0$, produces iterates $$ x_{k+1} = x_k - \lambda_k g_k $$ where $g_k$ is the gradient of $f$ in $x_k$. Here, the learning rate $\lambda_k$ is the main quantity controlling whether and how fast the sequence ${x_k}$ converges.

Setting $\lambda_k$ optimally requires knowledge of the distance to a solution. Let $x_\star$ be a minimizer of $f$ and $D$ be the associated distance $D = \| x_0 - x_\star \|$. A known result in optimization (see e.g. [Nes18L]) is that using a fixed step size of $$ \lambda_k = \frac{D}{G\sqrt{n}}, $$ the average iterate $\hat x_n = \frac{1}{n+1}\sum_{k=0}^{n} x_k$ converges with worst-case optimal rate $$ f(\hat x_n) - f(x_\star) = \mathcal{O}\left(\frac{DG}{\sqrt{n}}\right). $$

Setting this optimal step size requires knowledge of $D$ and $G$. Adaptivity to the Lipschitz constant $G$ can be achieved using a number of approaches, e.g., AdaGrad-Norm step sizes $$ \lambda_k = \frac{D}{\sqrt{\sum_{i=0}^k \| g_i \|^2}}. $$

However, $D$ still plays the key role of the numerator in the theoretical step size.

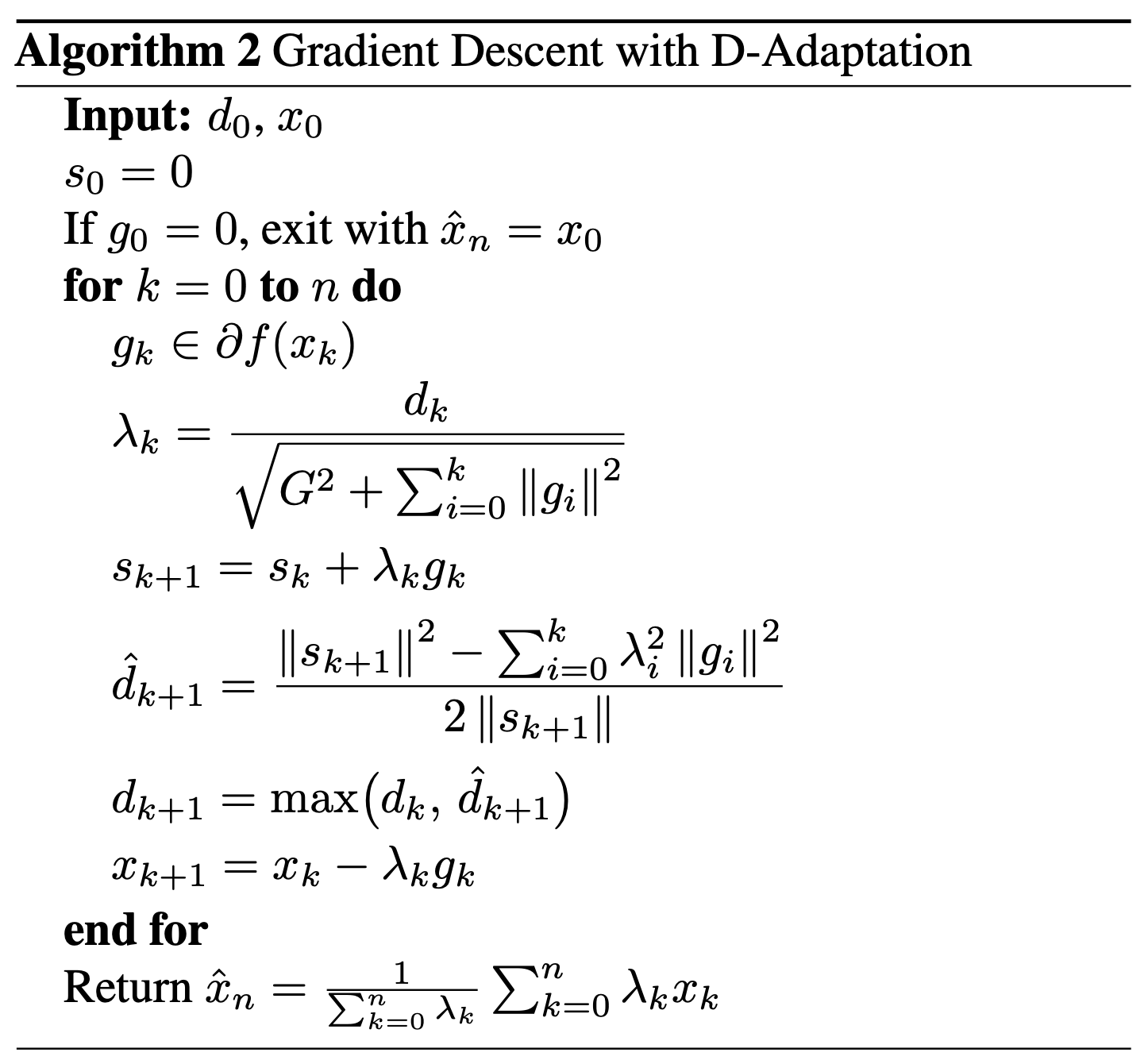

The key idea of the paper is to replace $D$ with a successively better lower bound on this distance to the solution. Several lower bounds are proposed, for instance, $$ D \geq \hat d_{k+1} = \frac{\| s_{k+1} \|^2 - \sum_{i=0}^k \lambda_i^2 || g_i ||^2}{ 2 \| s_{k+1} \| }, $$ where $s_{k+1} = s_k + \lambda_k g_k$ and $s_0 = 0$. The resulting Gradient Descent with D-Adaptation algorithm is proved to be asymptotically as fast as using the optimal lr.

It is worth mentioning that the authors have already proposed and implemented

improvements of D-Adaptation, in particular Prodigy

[Mis23P], which has better asymptotic rates and

adapts the lr faster in practice.